The on-premise agentic platform.

huoku aggregates scattered company knowledge into a single index, runs configurable agents on top of it, and keeps the whole stack inside your own Kubernetes cluster. One Helm chart. Production-grade security. No external dependencies.

How huoku works.

Both halves of the platform work as one: a knowledge pipeline that turns your sources into a searchable index, and agents that use that index to reason and call tools.

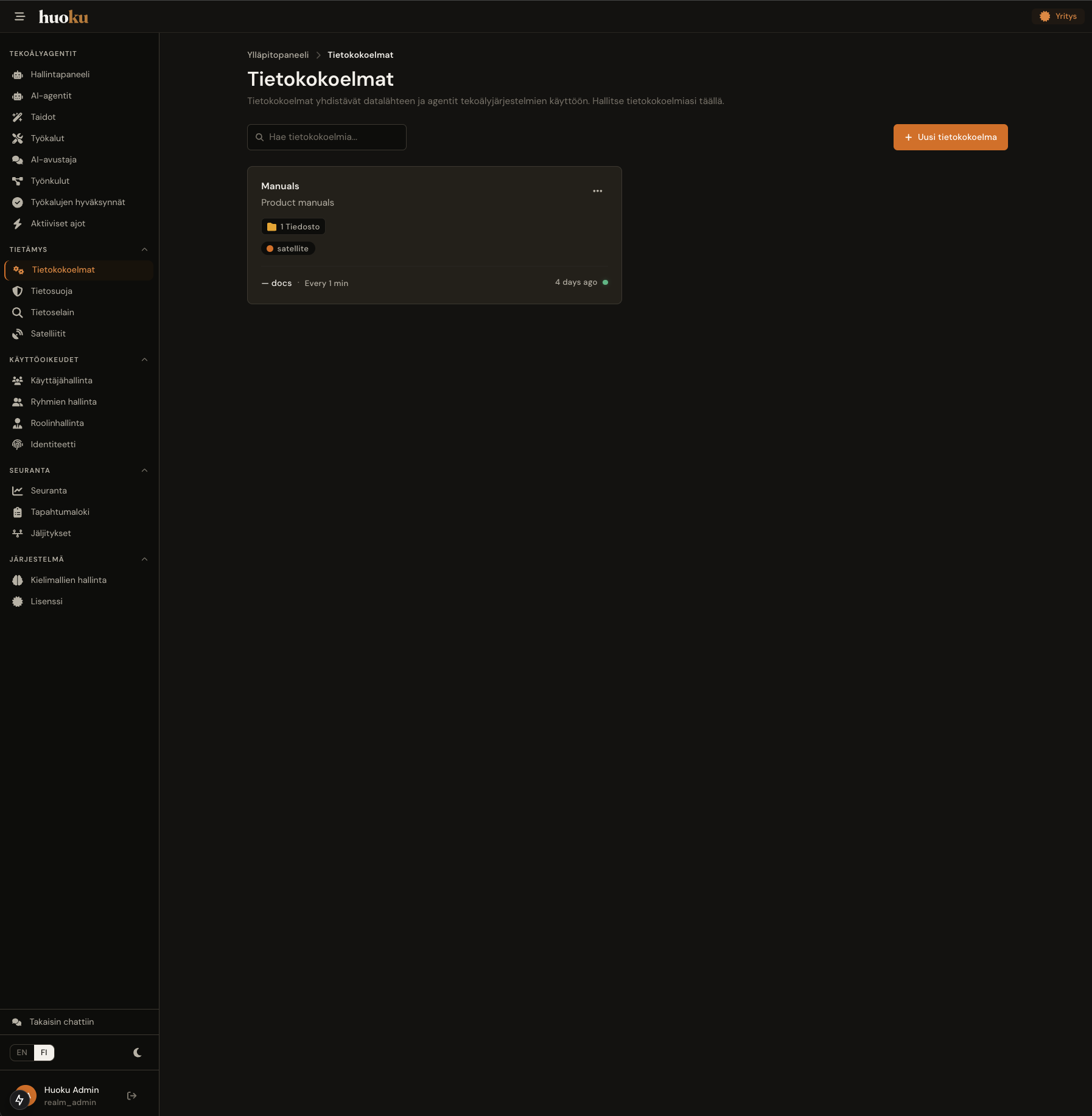

From sources to index

Satellite crawlers watch your connected systems for new, updated, and deleted documents. Content is parsed, chunked intelligently, run through DataGuard for PII masking, embedded into vectors, and indexed in OpenSearch.
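As a rough illustration of the order of those stages, here is a minimal Python sketch. It is not huoku's implementation: the function names, the fixed-size word chunking, the single email pattern standing in for DataGuard, and the hash-based toy embedding are all assumptions made for the example. The in-memory list stands in for the OpenSearch index.

```python
import hashlib
import re
from dataclasses import dataclass

# Hypothetical PII rule: the source does not describe DataGuard's actual patterns.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Chunk:
    doc_id: str
    position: int
    text: str
    vector: list[float]


def chunk_text(text: str, max_words: int = 50) -> list[str]:
    """Split text into fixed-size word windows (a stand-in for smarter chunking)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def mask_pii(text: str) -> str:
    """Replace email addresses with a placeholder before anything is indexed."""
    return EMAIL_RE.sub("[EMAIL]", text)


def embed(text: str, dims: int = 8) -> list[float]:
    """Toy deterministic embedding: hash bytes scaled to [0, 1]. Not a real model."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [b / 255 for b in digest[:dims]]


def build_index(doc_id: str, raw_text: str) -> list[Chunk]:
    """Run one parsed document through chunk -> mask -> embed."""
    entries = []
    for position, piece in enumerate(chunk_text(raw_text)):
        masked = mask_pii(piece)  # masking happens before embedding and indexing
        entries.append(Chunk(doc_id, position, masked, embed(masked)))
    return entries
```

The point of the ordering is that masking runs before embedding, so neither the stored text nor the vector is derived from the raw identifier.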

From index to action

Agents don't just retrieve information — they call APIs, combine sources, and execute workflows in the background. Managed from a single panel.

1. A trigger initiates the workflow: incoming email, API call, Kafka event, webhook, or a schedule.

2. The agent activates, accesses the knowledge base, and reasons about what to do.

3. The agent executes skills (multi-step workflows) and uses tools (actions via connectors).

4. It can delegate to sub-agents for specialized tasks.

5. Actions are delivered: email sent, API called, event published, webhook fired.

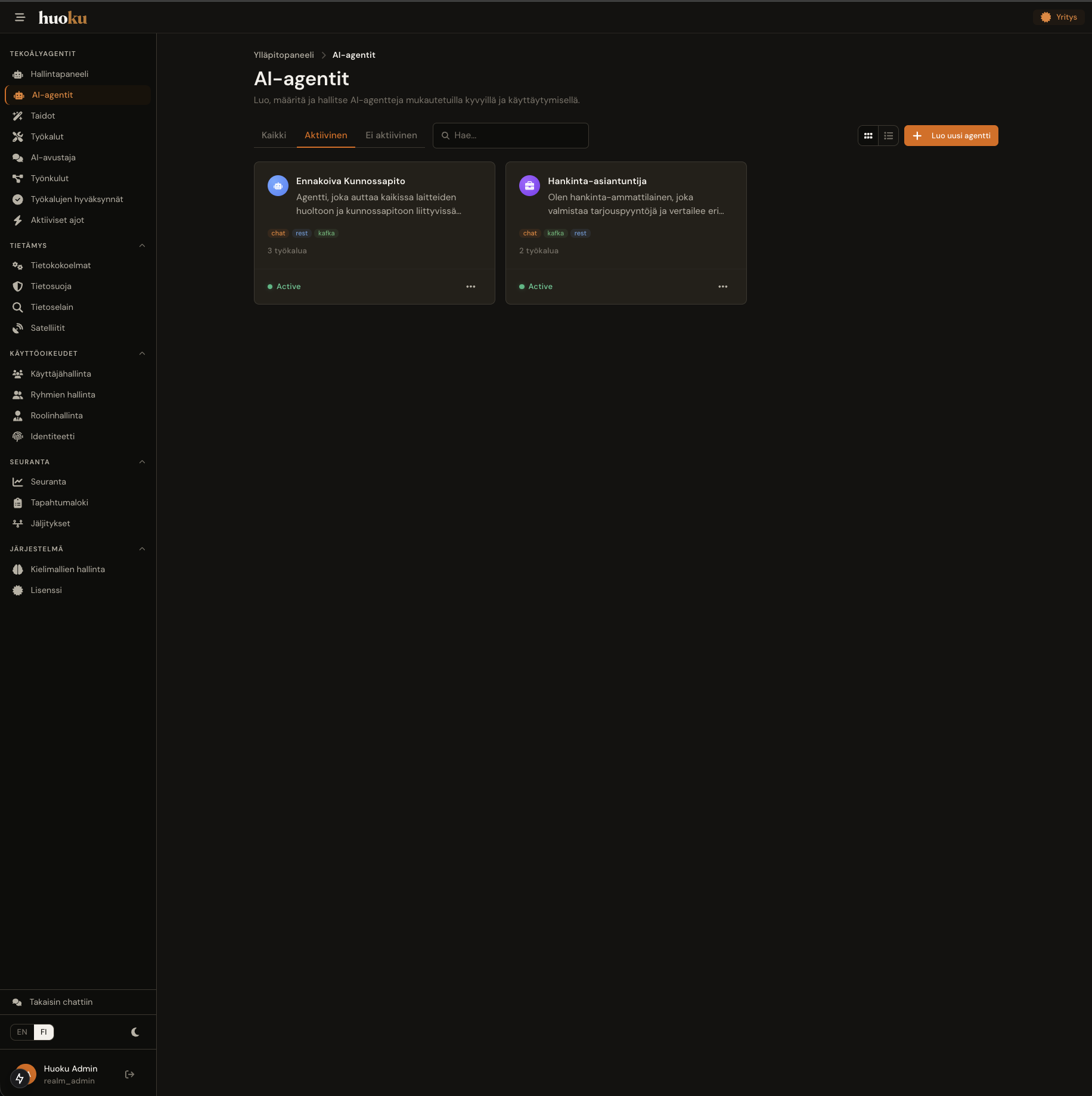

Your agents. Your rules.

Every agent in huoku is independently configurable. Admins create and manage agents through a visual editor — no coding, no DevOps tickets.

- System prompt: Behavior instructions and persona, scoped per agent.

- Knowledge access: Which knowledge collections the agent can see.

- Skills: Multi-step workflows the agent can execute.

- Tools: Concrete actions (email, REST, Kafka, webhook).

- Triggers: What activates the agent (schedule, event, query).

- LLM model: Model and temperature per agent, switchable at runtime.

- Sub-agents: Delegate specialized tasks to specialized agents.

- Identity: Custom name, icon, color for every agent.

- Welcome prompts: Localized greetings and example prompts.
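To make the shape of that configuration concrete, the settings above could be modeled roughly as follows. This is a hypothetical sketch, not huoku's schema: the `AgentConfig` class, its field names, the default values, and the 0.0 to 2.0 temperature range are assumptions. Only the tool and trigger kinds are taken from the list above.

```python
from dataclasses import dataclass, field

# Tool and trigger kinds as named in the list above.
ALLOWED_TOOLS = {"email", "rest", "kafka", "webhook"}
ALLOWED_TRIGGERS = {"schedule", "event", "query"}


@dataclass
class AgentConfig:
    name: str
    system_prompt: str
    knowledge_collections: list[str] = field(default_factory=list)
    skills: list[str] = field(default_factory=list)
    tools: list[str] = field(default_factory=list)
    triggers: list[str] = field(default_factory=list)
    model: str = "local-model"   # placeholder model name (assumption)
    temperature: float = 0.2     # assumed default
    sub_agents: list[str] = field(default_factory=list)
    icon: str = ""
    color: str = ""
    # Locale code -> example prompts shown in chat.
    welcome_prompts: dict[str, list[str]] = field(default_factory=dict)

    def validate(self) -> list[str]:
        """Return a list of human-readable problems; empty means the config is usable."""
        problems = []
        if not self.system_prompt.strip():
            problems.append("system_prompt is empty")
        if not 0.0 <= self.temperature <= 2.0:  # assumed valid range
            problems.append("temperature outside 0.0-2.0")
        for tool in sorted(set(self.tools) - ALLOWED_TOOLS):
            problems.append(f"unknown tool: {tool}")
        for trigger in sorted(set(self.triggers) - ALLOWED_TRIGGERS):
            problems.append(f"unknown trigger: {trigger}")
        return problems
```

Keeping every setting on one per-agent object is what lets two agents share a platform while differing in prompt, knowledge access, and model.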

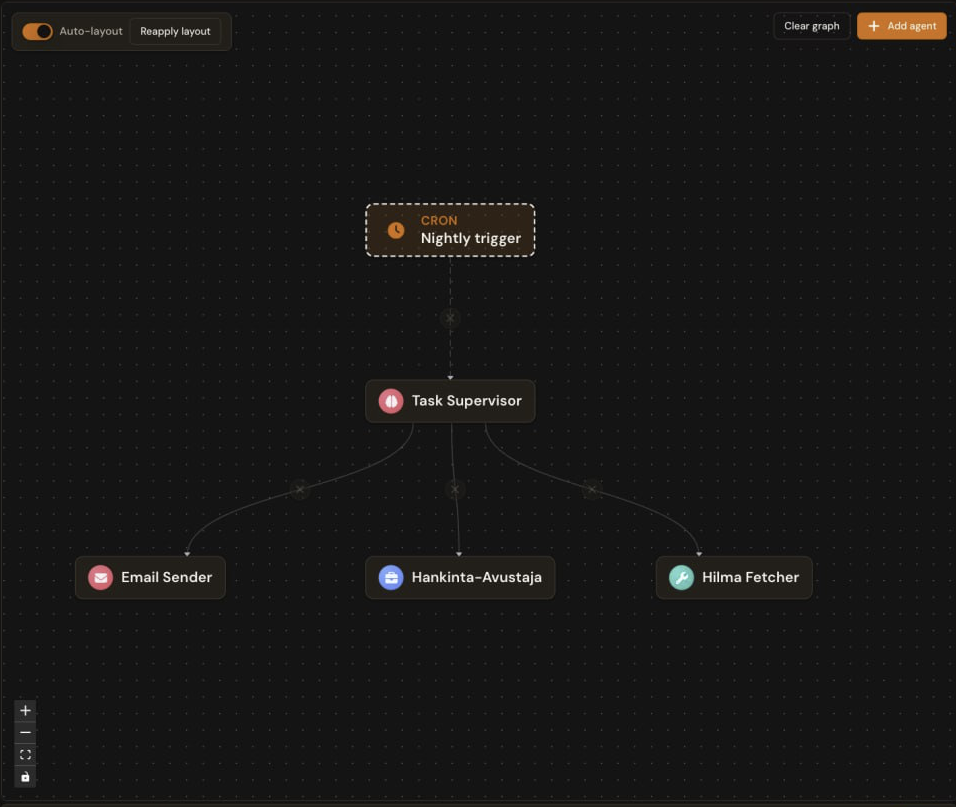

Agents that don't wait to be asked.

The real leverage of an agent platform isn't answering one question well — it's running agents that do their own work on a schedule, react to events, and deliver results before anyone notices the problem. huoku's workflow engine turns any agent into a 24/7 worker.

Daily sick-leave report

Aggregates absences from HR systems, flags patterns, drops a summary in Teams every morning.

Check machine metrics, alert if needed

Reads OPC-UA streams against the threshold playbook, opens a work order when an anomaly crosses confidence 0.9.

Watch new Hilma announcements

Pulls the latest public-tender notices, filters by relevance, drafts a go/no-go memo for the procurement lead.

Trigger

Schedule (cron-like), Kafka event, webhook, REST call, or on-demand from chat. The strip says how it starts.

Agent

Any configured agent can be wired to a workflow — reusing the same knowledge access, tools, and identity.

Owner

Every workflow runs under an identity. Permissions and audit logs follow the owner — not a service account.

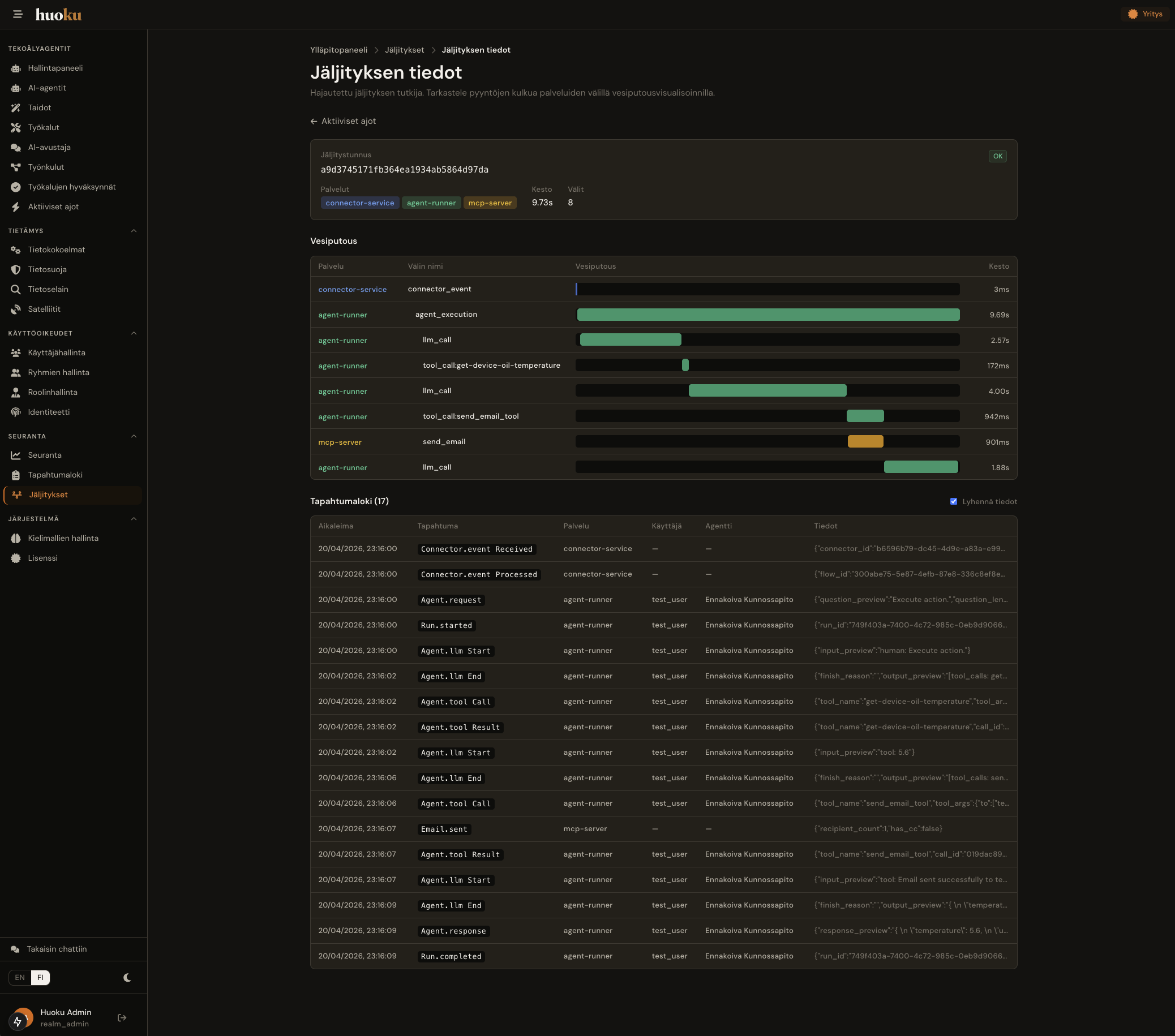

All runs land in monitoring with full traces. If a step fails, you see exactly where — and which retry policy fired.
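One way to picture a traced run with a retry policy is the sketch below. It is an assumption-laden illustration, not huoku's workflow engine: `RetryPolicy`, `StepTrace`, and `run_workflow` are hypothetical names, and the policy here is a simple fixed number of attempts with a fixed delay.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class RetryPolicy:
    max_attempts: int = 3
    delay_seconds: float = 0.0


@dataclass
class StepTrace:
    step: str
    attempts: int
    ok: bool
    error: str = ""


def run_workflow(
    steps: list[tuple[str, Callable[[], object]]],
    policy: RetryPolicy,
) -> list[StepTrace]:
    """Run steps in order, retrying each per the policy, and record one trace per step."""
    traces = []
    for name, action in steps:
        error = ""
        for attempt in range(1, policy.max_attempts + 1):
            try:
                action()
                traces.append(StepTrace(name, attempt, True))
                break
            except Exception as exc:
                error = str(exc)
                if attempt < policy.max_attempts:
                    time.sleep(policy.delay_seconds)
        else:
            # Retries exhausted: record the failure and stop the run here.
            traces.append(StepTrace(name, policy.max_attempts, False, error))
            break
    return traces
```

The returned traces show which step failed, how many attempts were made, and the last error, which is the information a monitoring view would need to pinpoint the failure.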

Connects to everything. Depends on nothing.

The same agent answers an employee in chat, responds to a REST call from an integration, and listens on Kafka for real-time workflows. One management plane, many channels.

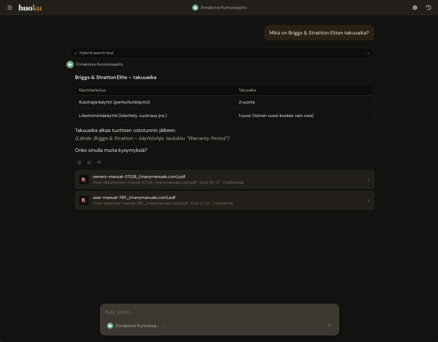

Chat interface

Browser-based chat for employees. Conversation history, source citations with PDF page numbers, agent-specific welcome prompts. Each user sees only the data they're authorized to see.

- Source citations on every answer

- Per-user conversation history

- Agent-specific welcome messages and example prompts

- User permissions inherited down to chunk level

Protocols and APIs

Programmatic access for custom integrations.

Event-driven integration for real-time workflows.

Exposes knowledge to huoku's own agents — and optionally to external AI assistants.

Agent-to-agent interoperability for multi-agent systems.

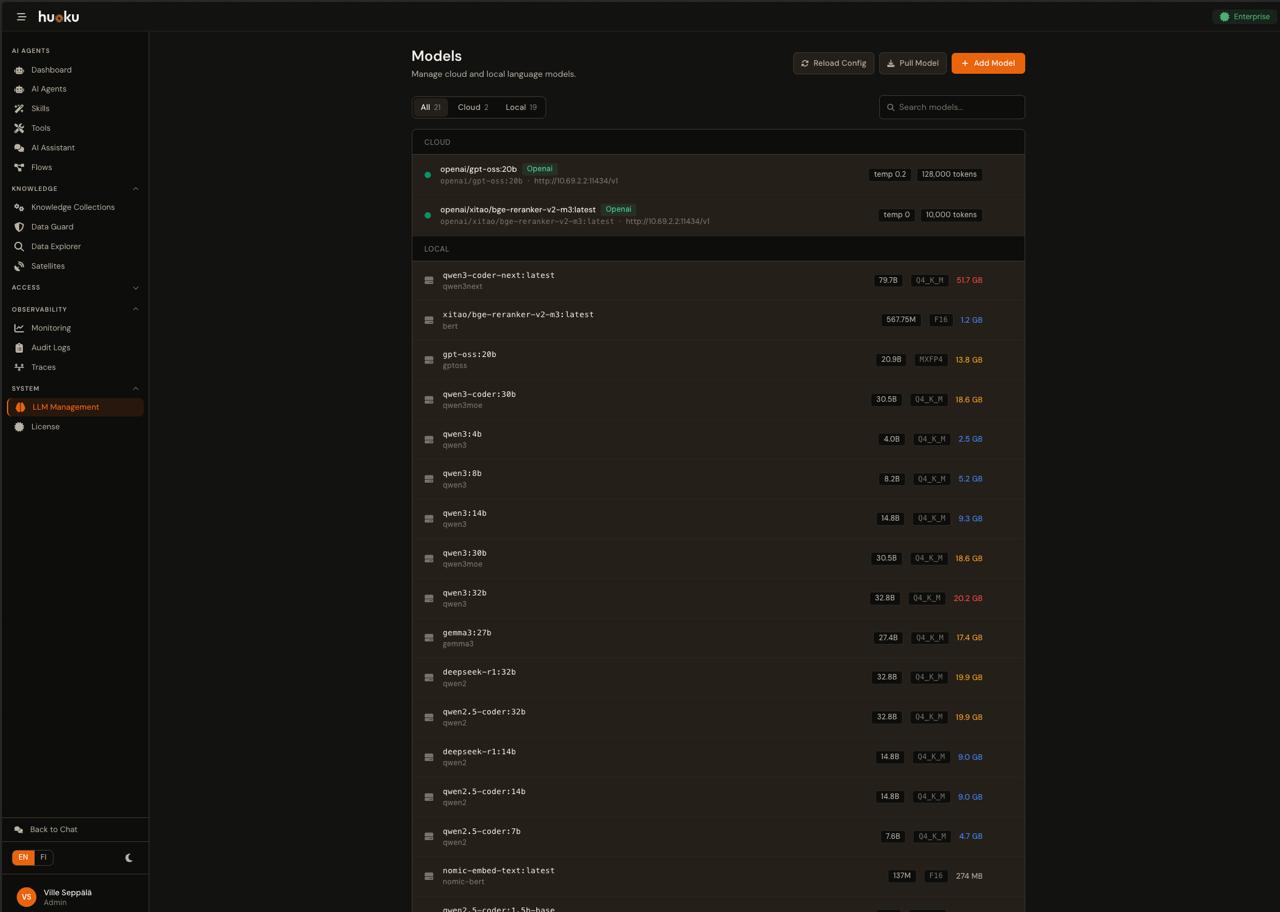

One platform, any model.

Cloud providers for top-tier performance, local models via Ollama and vLLM for air-gapped environments. Models are switchable at runtime — no restarts required.

- OpenAI (GPT-4 and others)

- Anthropic (Claude)

- Azure OpenAI

- Ollama (fully local, air-gapped)

- vLLM (high-performance local inference)

Security is not a feature. It's the architecture.

Every chunk, query, and answer carries an identity. Permissions follow the data from source system to final answer — without unbundling or duplicating your existing access model.

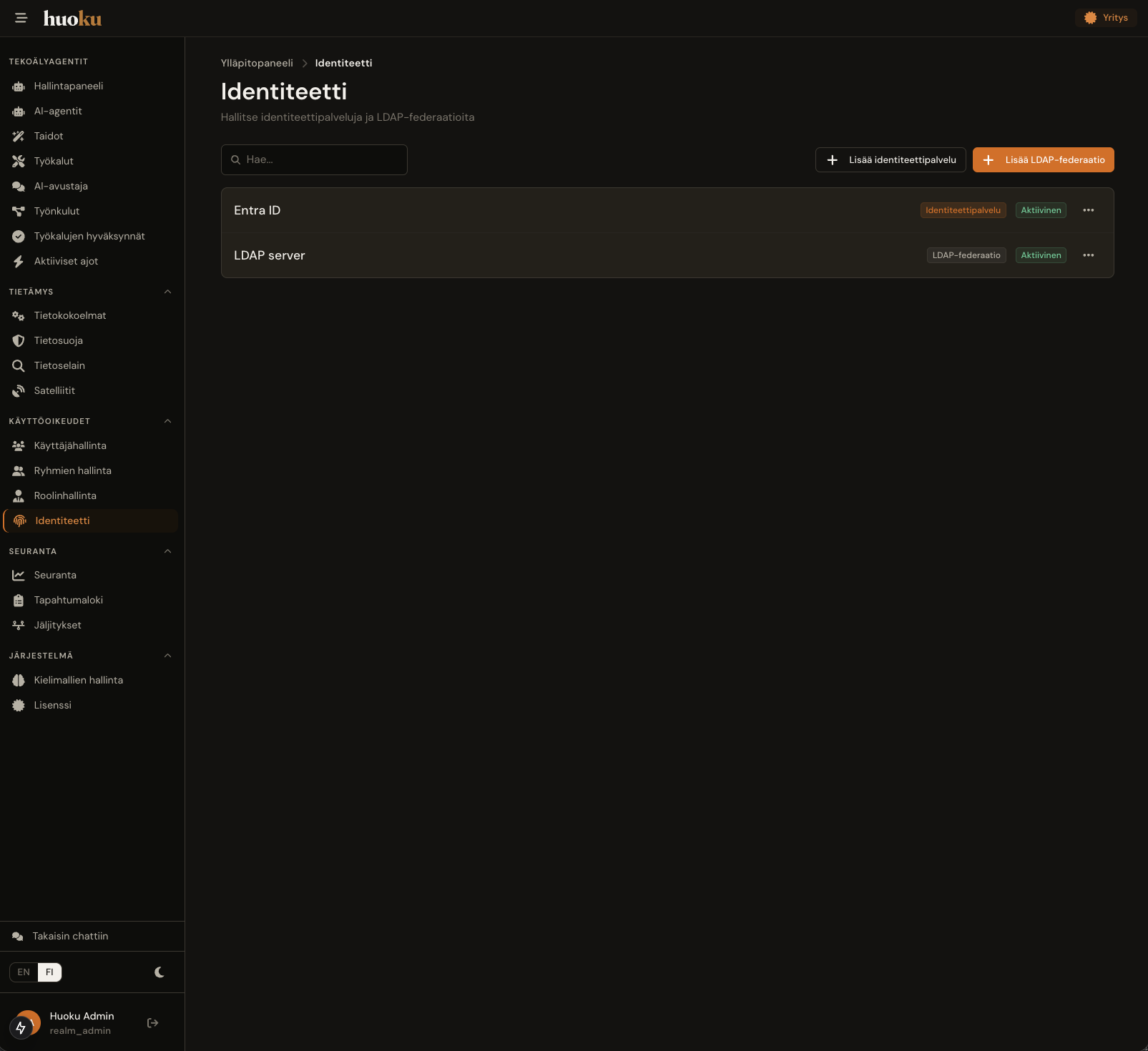

Identity and access

- Keycloak OIDC with PKCE

- Role-based access control — Admin, User, Viewer

- Document-level security — results filtered by user permissions

- Agent-level permissions — each agent's knowledge and tools controlled independently

- Per-user chat isolation
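The combination of document-level security and agent-level permissions can be sketched as a filter that a search hit must pass twice. This is a simplified assumption, not huoku's access model: `IndexedChunk`, its fields, and the group-based check are hypothetical, and a real deployment would more likely apply the filter inside the search query than after it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedChunk:
    text: str
    collection: str
    allowed_groups: frozenset[str]  # groups copied from the source system's permissions


def authorized_results(
    hits: list[IndexedChunk],
    user_groups: set[str],
    agent_collections: set[str],
) -> list[IndexedChunk]:
    """Keep only hits that both the agent and the user are allowed to see."""
    return [
        hit
        for hit in hits
        if hit.collection in agent_collections   # agent-level permission
        and hit.allowed_groups & user_groups     # document-level permission
    ]
```

A chunk reaches the answer only when both checks pass, so widening an agent's knowledge access never widens what an individual user can read.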

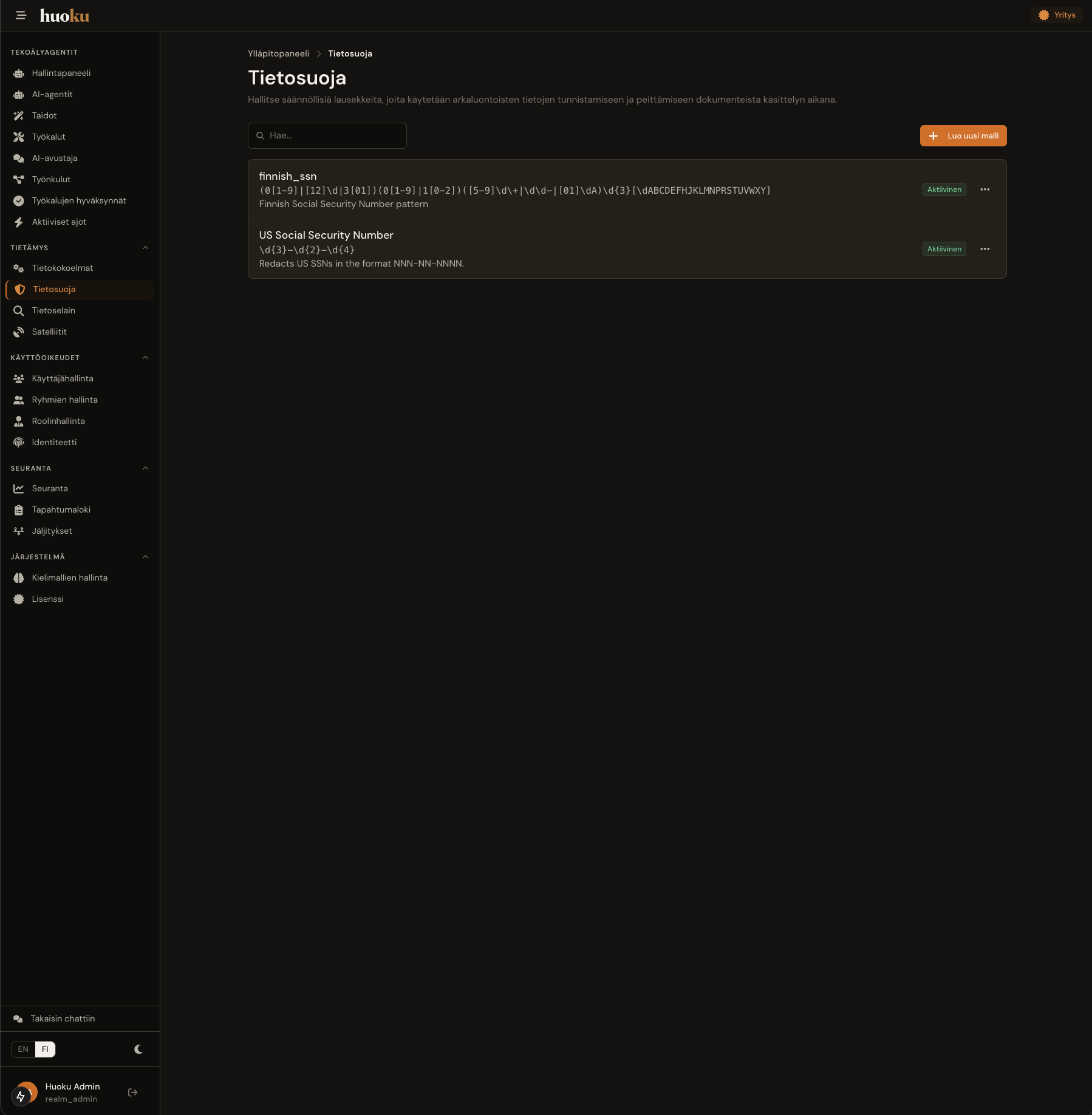

Your data stays under your control

- DataGuard PII masking in the knowledge pipeline — sensitive identifiers never enter the index

- Data never leaves your network — all processing on your own infrastructure

- Permissions follow the data from source system to final answer

- No backend service directly exposed to the internet

Every step, auditable

- GDPR-ready with audit logging

- Full audit trail of all agent activity, tool invocations, and search queries

- Air-gapped deployment option with local models

- EU AI Act requirements satisfied at the structural level

AI on your own infrastructure.

On your terms.

Tell us briefly about your environment and your goals — we'll come back within two business days with a concrete next step.